Paul Starke

AI Animation Engineer · Game Developer · Research Engineer · Gamer

I'm a Research Engineer at Meta Reality Labs working on data-driven motion synthesis. Prior to Meta, I worked as an ML Engineer at Electronic Arts, and I have 4+ years of industry experience in AI/ML engineering, graphics, and vision. I completed an M.Sc. and a B.Sc. in Informatics, and my recent work on motion synthesis has been published at scientific venues and featured in industry media releases.

Animation

Neural Motion Generation, Inverse Kinematics, Character Controllers, Motion Matching, Motion Phase Alignment, Mocap Visualization, (Hand-)Object Interaction, Body Tracking

Programming

C#, Python, PyTorch

Technology/Tools

Unity3D, Unreal Engine, LaTeX, Blender

Artificial Intelligence

Deep Learning, AI-assisted coding

Projects

AI4AnimationPy

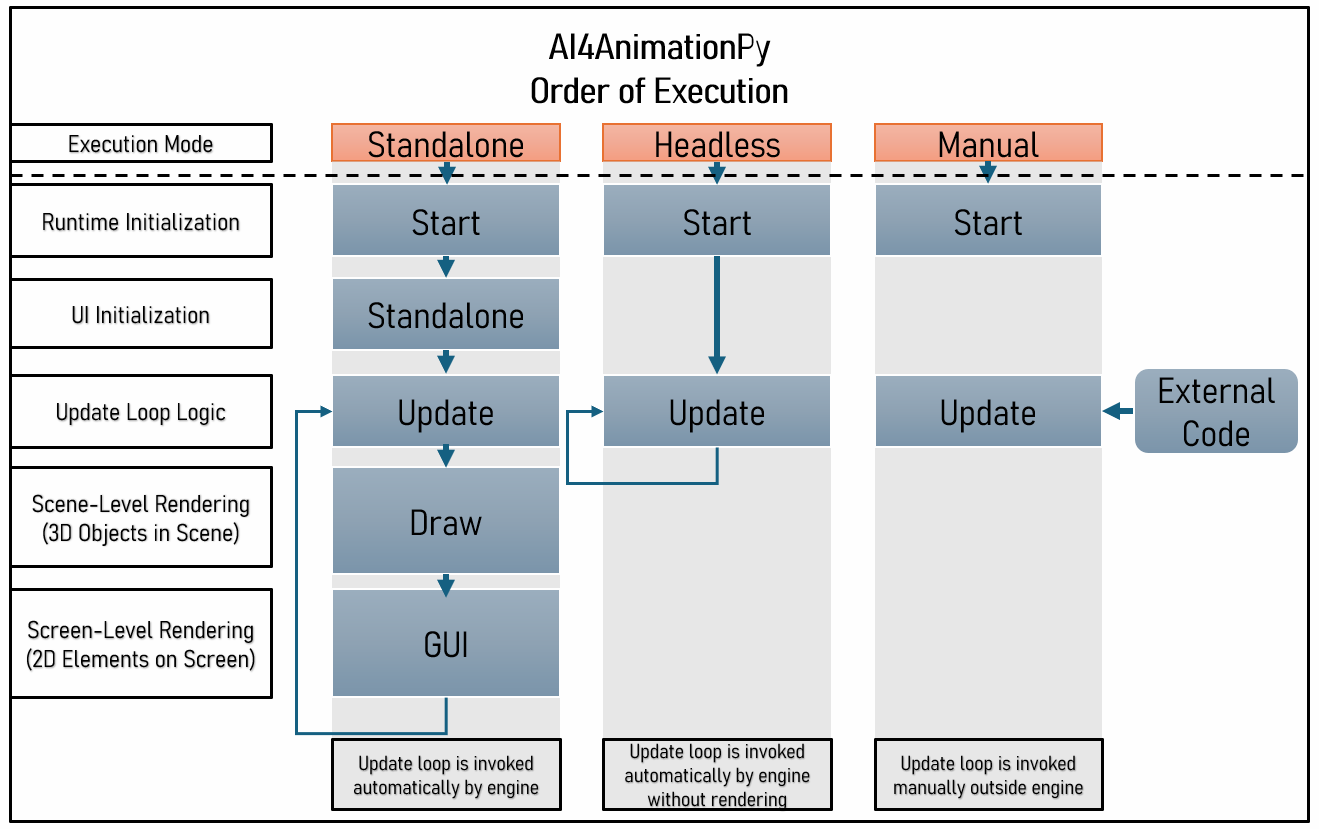

Meta · Open Source · 2026

AI4AnimationPy is a Python framework for AI-driven character animation that unifies motion capture processing, model training, inference, and real-time visualization in a single environment.

The framework runs entirely on NumPy and PyTorch, removing the original AI4Animation workflow’s dependency on Unity for data processing and experimentation while preserving its familiar ECS-style architecture, update loops, and rendering pipeline.
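To make the ECS-style structure a little more concrete, here is a minimal sketch of an entity hierarchy whose components are driven by a shared, depth-first update loop. The class and method names (`Entity`, `Component`, `start`, `update`, `Spinner`) are hypothetical and are not AI4AnimationPy's actual API; the sketch only illustrates the general pattern of entities owning components with lifecycle hooks.

```python
from typing import List, Optional


class Component:
    """Base class for behaviour attached to an entity (hypothetical API)."""

    def __init__(self, entity: "Entity") -> None:
        self.entity = entity

    def start(self) -> None:
        """Called once before the first update."""

    def update(self, dt: float) -> None:
        """Called every frame with the elapsed time in seconds."""


class Entity:
    """A named node in a scene hierarchy that owns components and children."""

    def __init__(self, name: str, parent: Optional["Entity"] = None) -> None:
        self.name = name
        self.children: List["Entity"] = []
        self.components: List[Component] = []
        if parent is not None:
            parent.children.append(self)

    def add_component(self, cls, *args):
        component = cls(self, *args)
        self.components.append(component)
        return component

    def start(self) -> None:
        for component in self.components:
            component.start()
        for child in self.children:
            child.start()

    def update(self, dt: float) -> None:
        # Depth-first: a parent's components update before its children's
        for component in self.components:
            component.update(dt)
        for child in self.children:
            child.update(dt)


class Spinner(Component):
    """Example component that accumulates a rotation angle (degrees) every frame."""

    def __init__(self, entity: Entity, speed: float) -> None:
        super().__init__(entity)
        self.speed = speed
        self.angle = 0.0

    def update(self, dt: float) -> None:
        self.angle += self.speed * dt


root = Entity("Root")
actor = Entity("Actor", parent=root)
spinner = actor.add_component(Spinner, 90.0)
root.start()
for _ in range(60):  # simulate one second at 60 fps
    root.update(1.0 / 60.0)
print(round(spinner.angle, 6))  # 90.0
```

Driving everything from the root keeps the update order deterministic (parents before children), which is the property an animation loop usually relies on when child transforms depend on their parents.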


Demos

- Stylized Locomotion: neural-network-driven locomotion and style control.
- Training Toy Example: minimal learning setup for understanding the training workflow.
- ECS: entity hierarchy with custom components and lifecycle-driven structure.
- Training Future Motion Prediction Example: interactive training visualization.
- Actor: load and display a skinned character model.
- Motion Import (GLB/BVH/NPZ): import and inspect motion capture assets from common research formats.
- Motion Editor: browse and visualize animation data and motion features interactively.
- Inverse Kinematics: interactive real-time IK solving.

Architecture

AI Emotes for Meta Horizon

Meta · Horizon · 2025

AI Emotes is a shipped feature that lets users create personalized full-body avatar emotes from videos. Users can record a video of themselves, or select from stock videos, and generate a custom emote for their avatar directly on Horizon iOS.

The pipeline runs through a video-based body tracking system, and the recovered motion is refined by a motion model before being applied to the user's avatar. The motion model makes the system more robust against foot sliding, jitter, body drift, and ground-penetration artifacts by projecting noisy input motion onto a learned motion manifold trained on high-quality mocap data.
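The projection idea can be illustrated with a much simpler, linear stand-in: fit a low-dimensional subspace (PCA) to clean pose vectors, then project noisy tracked poses onto it so that noise orthogonal to the subspace is removed. This is only a toy under stated assumptions. The shipped motion model is a learned neural model, not PCA, and the synthetic data, dimensions, and noise level below are made up for illustration.

```python
import numpy as np


def fit_linear_manifold(clean_poses: np.ndarray, dim: int):
    """Fit an affine subspace (PCA) to clean pose vectors of shape (N, D)."""
    mean = clean_poses.mean(axis=0)
    # Right singular vectors of the centered data are the principal axes
    _, _, vt = np.linalg.svd(clean_poses - mean, full_matrices=False)
    return mean, vt[:dim]  # basis has shape (dim, D) with orthonormal rows


def project(poses: np.ndarray, mean: np.ndarray, basis: np.ndarray) -> np.ndarray:
    """Project (possibly noisy) poses onto the fitted subspace."""
    coeffs = (poses - mean) @ basis.T
    return mean + coeffs @ basis


rng = np.random.default_rng(0)
# Synthetic "mocap": 12-D pose vectors that truly live on a 3-D subspace
true_basis = np.linalg.qr(rng.normal(size=(12, 3)))[0].T  # (3, 12)
clean = rng.normal(size=(500, 3)) @ true_basis
mean, basis = fit_linear_manifold(clean, dim=3)

# Noisy "tracked" poses: clean poses plus jitter in all 12 dimensions
test_clean = rng.normal(size=(100, 3)) @ true_basis
noisy = test_clean + 0.1 * rng.normal(size=test_clean.shape)
denoised = project(noisy, mean, basis)

err_noisy = np.mean(np.linalg.norm(noisy - test_clean, axis=1))
err_denoised = np.mean(np.linalg.norm(denoised - test_clean, axis=1))
print(err_noisy > err_denoised)  # True
```

A linear subspace cannot capture the nonlinear structure of real human motion, which is why a learned model is used in practice; the sketch only shows why projecting onto a manifold of valid motion suppresses artifacts that lie off that manifold.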

AI Emotes is live on Horizon iOS and has been used by millions of users to create personalized emotes for their avatars. The technology has also been applied to other features within Horizon, such as avatar animation for virtual reality experiences.

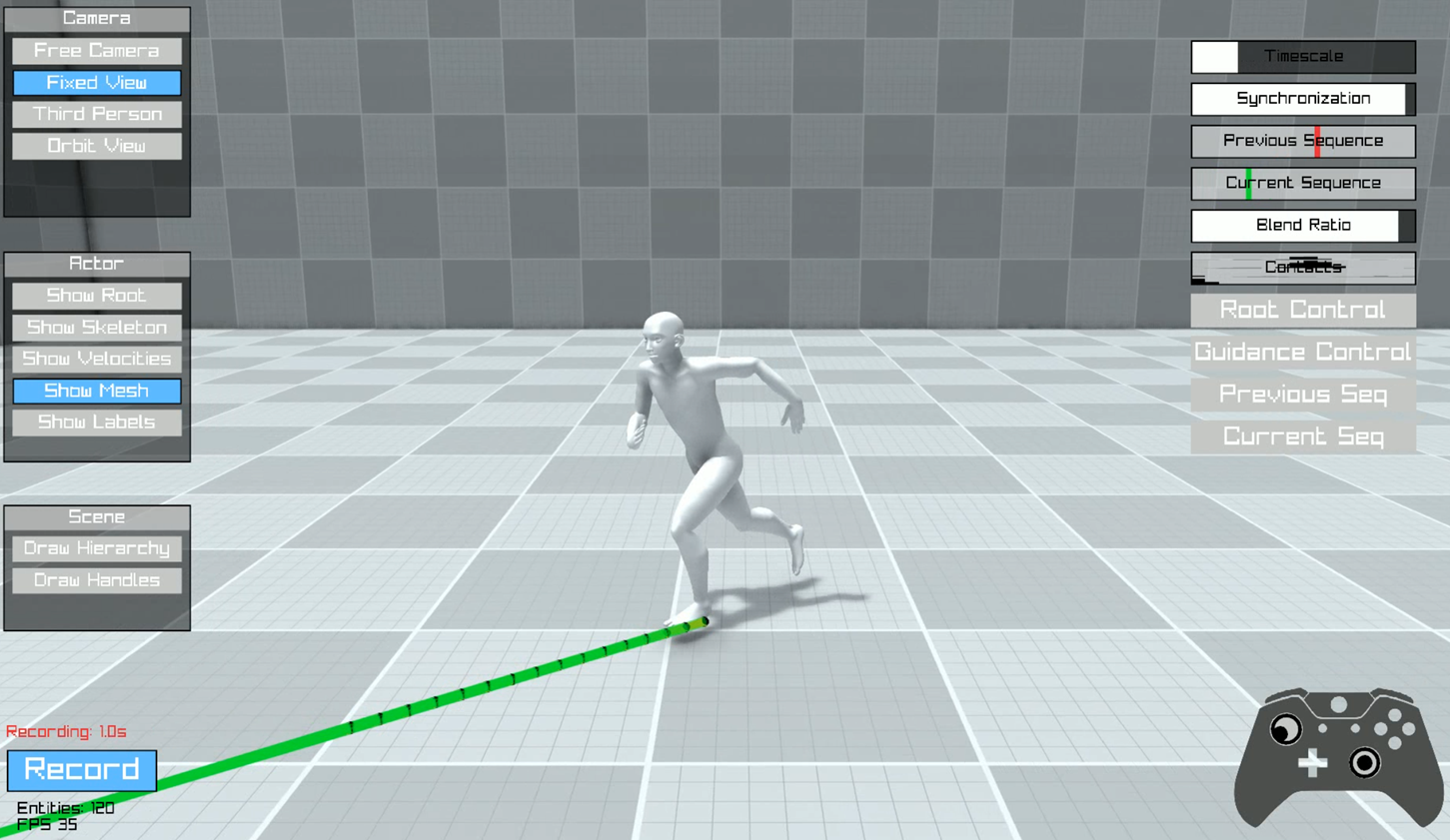

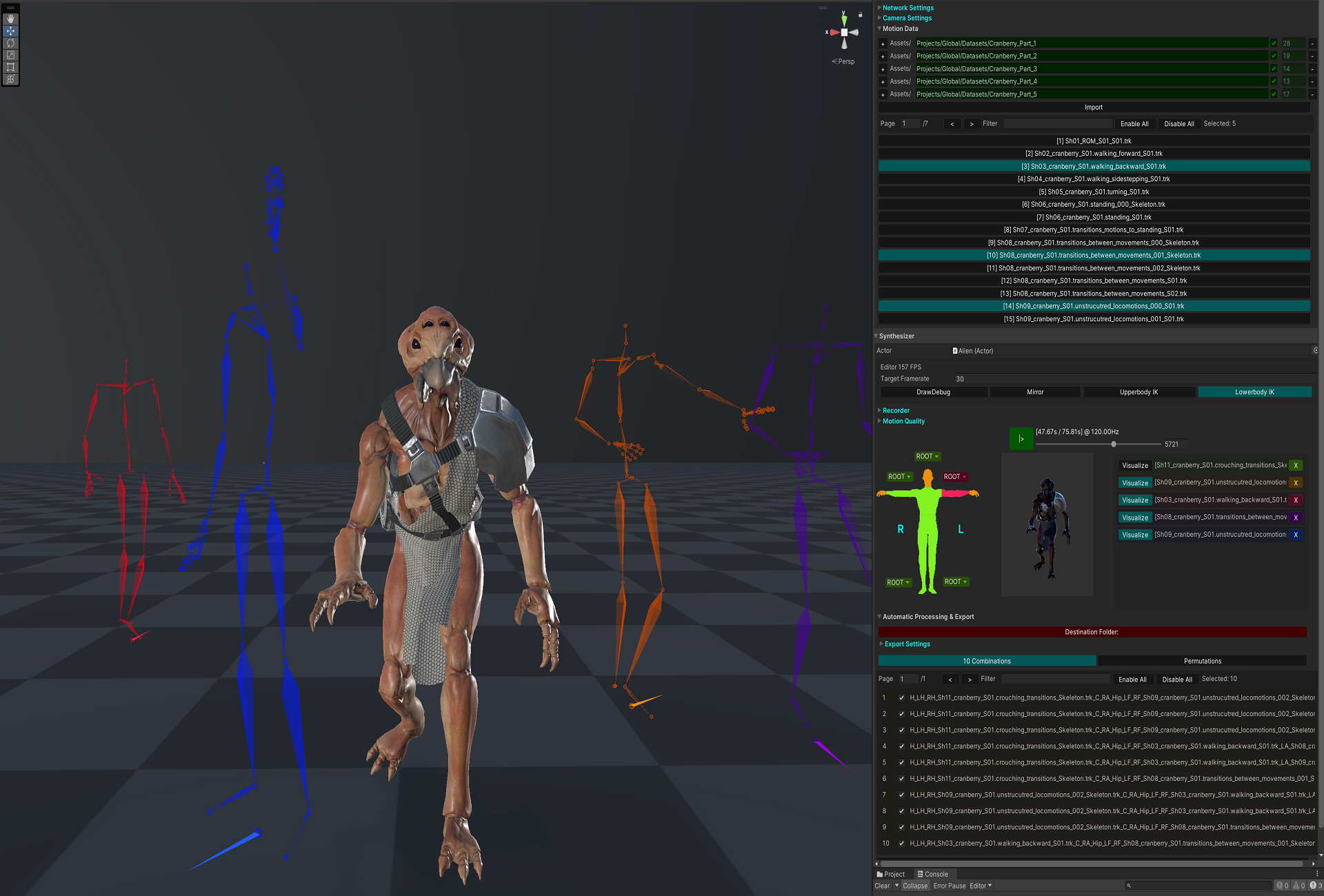

Categorical Codebook Matching for Embodied Character Controllers

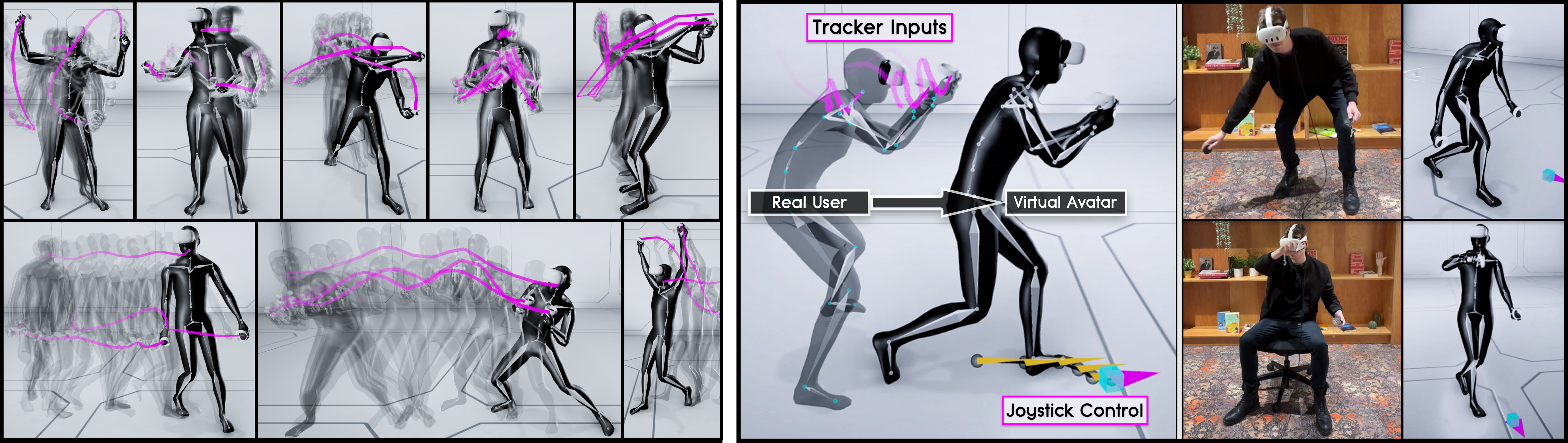

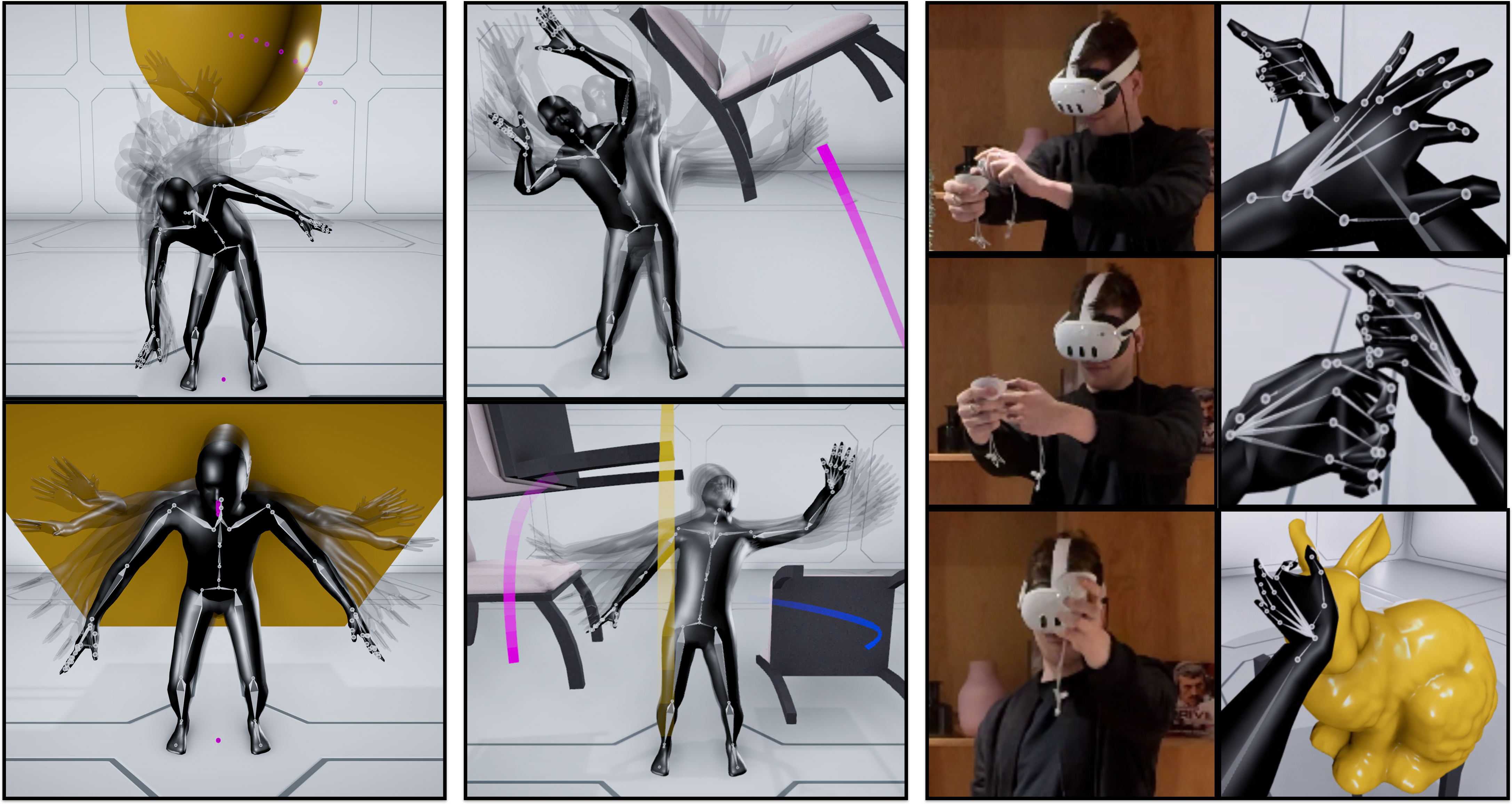

SIGGRAPH 2024

Translating motions from a real user onto a virtual embodied avatar is a key challenge for character animation in the metaverse. In this work, we present a novel generative framework that maps a set of sparse sensor signals to full-body avatar motion in real time while faithfully preserving the motion context of the user. In contrast to existing techniques that train a motion prior and its mapping from control to motion separately, our framework learns the motion manifold and how to sample from it at the same time, in an end-to-end manner. To achieve this, we introduce a technique called codebook matching, which matches the probability distributions of two categorical codebooks, one for the inputs and one for the outputs, to synthesize character motions. We demonstrate that this technique can successfully handle ambiguity in motion generation and produce high-quality character controllers from unstructured motion capture data. Our method is especially useful for interactive applications like virtual reality or video games, where high accuracy and responsiveness are needed.


Unlike existing methods for kinematic character control that learn a direct mapping between inputs and outputs or use a motion prior trained on the motion data alone, our framework learns from both the inputs and outputs simultaneously to form a motion manifold that is informed about the control signals. To learn such a setup in a supervised manner, we propose a technique that we call Codebook Matching, which enforces similarity between both latent probability distributions Z𝑋 and Z𝑌. In the context of motion generation, instead of directly predicting the motion outputs from the control inputs, we only predict the probability of each of them appearing. By introducing a matching loss between both categorical probability distributions, codebook matching allows Z𝑌 to be substituted by Z𝑋 at test time.
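A minimal NumPy sketch of the mechanics described above: two encoders each produce logits for a set of categorical codebook channels, a matching loss compares the control-conditioned distribution Z𝑋 with the motion-conditioned Z𝑌, and at test time codes are sampled from Z𝑋 alone. The encoders are replaced by random logits, the channel and category counts are hypothetical, and the mean-squared-error form of the matching loss is an assumption; the paper's exact loss and network design may differ.

```python
import numpy as np


def softmax(logits: np.ndarray, axis: int = -1) -> np.ndarray:
    z = logits - logits.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def matching_loss(p_x: np.ndarray, p_y: np.ndarray) -> float:
    """Assumed form: mean squared error between two categorical distributions."""
    return float(np.mean((p_x - p_y) ** 2))


def sample_codes(probs: np.ndarray, rng: np.random.Generator) -> np.ndarray:
    """Draw one category per codebook channel from probs of shape (C, K)."""
    return np.array([rng.choice(probs.shape[1], p=p) for p in probs])


C, K = 4, 8  # hypothetical: 4 codebook channels with 8 categories each
rng = np.random.default_rng(0)
logits_y = rng.normal(size=(C, K))                     # stand-in for the motion encoder (Z_Y)
logits_x = logits_y + 0.05 * rng.normal(size=(C, K))   # stand-in for the control encoder (Z_X)
p_y, p_x = softmax(logits_y), softmax(logits_x)
print(matching_loss(p_x, p_y))  # small, since the two distributions nearly agree

# At test time only the control inputs exist, so codes are sampled from Z_X
codes = sample_codes(p_x, rng)
one_hot = np.eye(K)[codes]        # (C, K) one-hot code per channel
latent = one_hot.reshape(-1)      # flattened code vector a decoder would map to motion
```

Sampling discrete codes, rather than regressing a single continuous output, is what lets the model commit to one of several plausible motions instead of averaging them.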


Our method is not limited to three-point inputs: it can also generate embodied character movements with additional joystick or button controls, in what we call hybrid control mode. In this setting, the user, engineer, or artist can additionally tell the character where to go via a simple goal location while preserving the original motion context from the three-point tracking signals. This broadens the range of applications we can address, for example walking, running, or crouching in the virtual world while standing or even sitting in the real world.

Furthermore, our codebook matching architecture shares many similarities with motion matching and is able to learn a similar structure in an end-to-end manner. While motion matching can bypass ambiguity in the mapping from control to motion by selecting among candidates with similar query distances, our setup selects possible outcomes from predicted probabilities and naturally projects onto valid output motions if their probabilities are similar. However, in contrast to database searches, codebook matching can effectively compress the motion data, since the same motions map to the same codes, and it can bypass ambiguity issues that existing learning-based methods such as standard feed-forward networks (MLP) or variational models (CVAE) may struggle with. We demonstrate these capabilities by reconstructing the ambiguous toy example functions in the figure below.
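The ambiguity problem itself can be reproduced in a few lines. The sketch below uses an ambiguous toy function (every input x has two valid outputs, +√x and -√x) to show why a squared-error regressor, which is what a plain MLP optimizes, collapses to the average, while sampling from a categorical distribution over output bins returns one of the valid answers. The histogram over neighboring samples is a crude stand-in for a learned categorical model, not the codebook matching network itself, and the bin count and neighborhood width are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)
# Ambiguous toy data: every x has two valid answers, +sqrt(x) and -sqrt(x)
x = rng.uniform(0.0, 1.0, size=5000)
y = np.sqrt(x) * rng.choice([-1.0, 1.0], size=x.shape)

# 1) Squared-error regression: the optimum is the conditional mean (about 0)
coeffs = np.polyfit(x, y, deg=3)
x_query = 0.64
print(np.polyval(coeffs, x_query))  # close to 0.0, which is not a valid answer

# 2) Categorical alternative: estimate probabilities over discrete output bins,
#    then sample one bin instead of averaging
bins = np.linspace(-1.0, 1.0, 41)                 # 40 output categories
centers = 0.5 * (bins[:-1] + bins[1:])
near = np.abs(x - x_query) < 0.02                 # training samples with similar input
hist, _ = np.histogram(y[near], bins=bins)
probs = hist / hist.sum()
samples = rng.choice(centers, size=5, p=probs)
print(samples)  # each sample lies near +0.8 or -0.8
```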

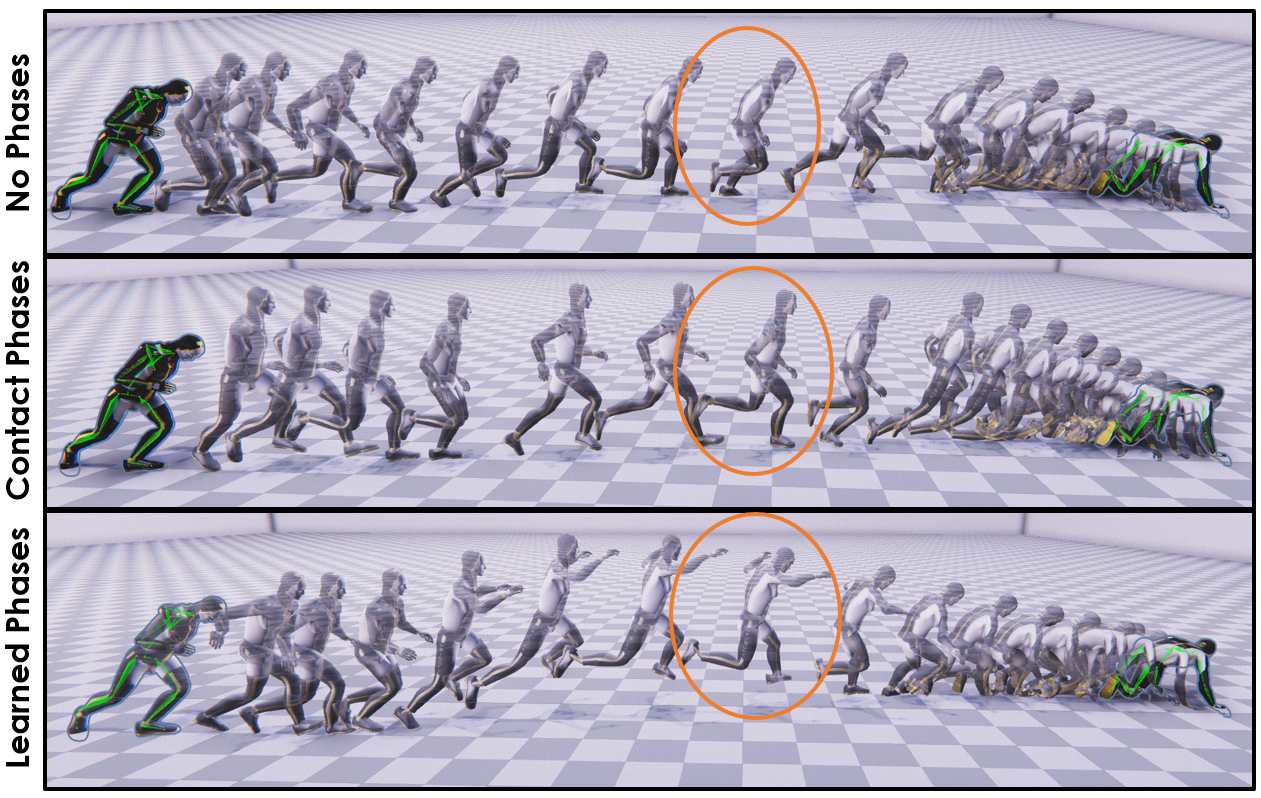

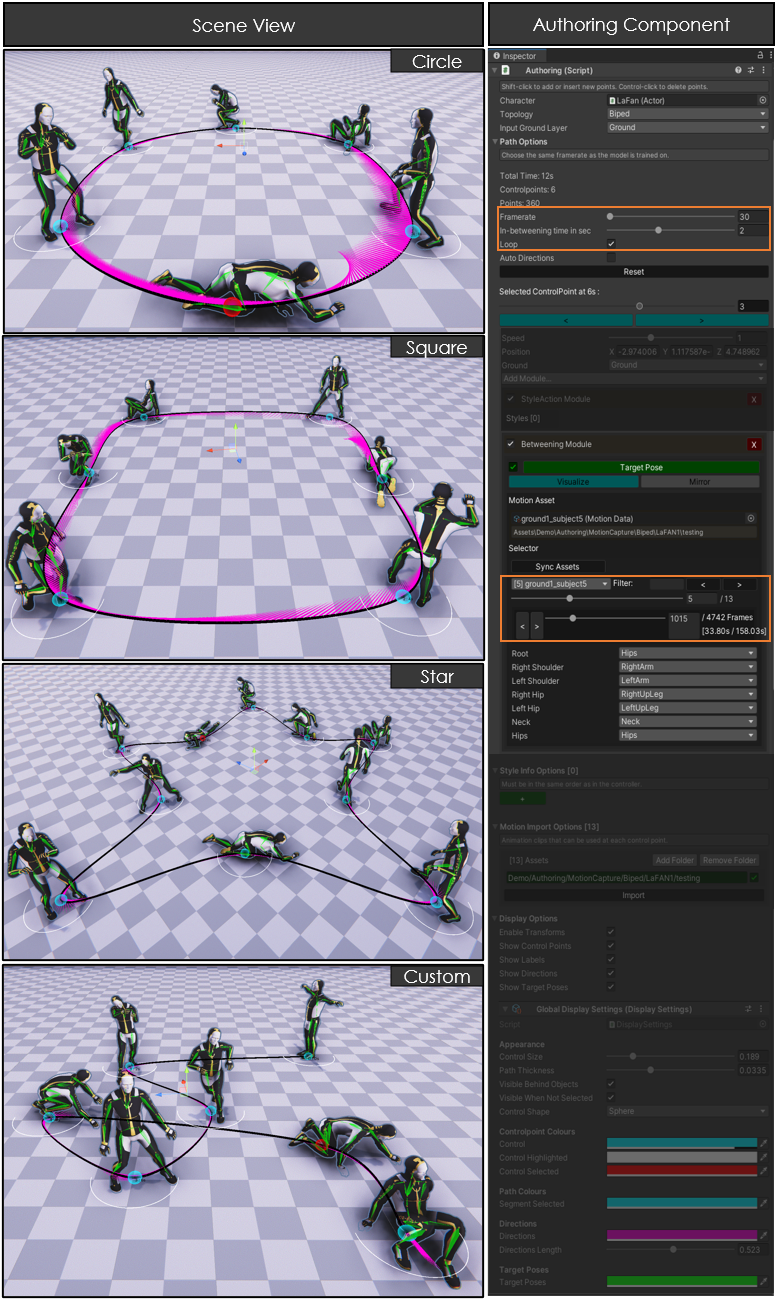

Motion In-Betweening with Phase Manifolds

SCA 2023

This paper introduces a novel data-driven motion in-betweening system that reaches target poses of characters by making use of phase variables learned by a Periodic Autoencoder. The approach uses a mixture-of-experts neural network model in which the phases cluster movements in both space and time with different expert weights. Each generated set of weights then produces a sequence of poses in an autoregressive manner between the current and target state of the character. In addition, to satisfy poses that are manually modified by animators, or where certain end effectors serve as constraints to be reached by the animation, a learned bi-directional control scheme is implemented. Using phases for motion in-betweening sharpens the interpolated movements and furthermore stabilizes the learning process. Moreover, more challenging movements beyond locomotion can be synthesized, and style control is enabled between given target keyframes. The framework can compete with state-of-the-art methods for motion in-betweening in terms of motion quality and generalization, especially for long transition durations. This framework contributes to faster prototyping workflows for creating animated character sequences, which is of great interest to the game and film industries.
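The phase-gated mixture-of-experts idea can be sketched as follows: each phase channel is encoded as a point on a circle scaled by its amplitude, a gating layer turns these features into blending coefficients, and the expert weight matrices are blended into a single layer that is applied autoregressively. All sizes, the single-layer network, the random weights, and the fixed per-frame phase advance are hypothetical; in the described system the networks are trained and the phases come from a Periodic Autoencoder.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    e = np.exp(z - z.max())
    return e / e.sum()


def phase_features(phase: np.ndarray, amplitude: np.ndarray) -> np.ndarray:
    """Encode each phase channel as a 2-D point on a circle scaled by its amplitude."""
    angle = 2.0 * np.pi * phase
    return np.concatenate([amplitude * np.sin(angle), amplitude * np.cos(angle)])


def blended_layer(x, phase, amplitude, gating_w, expert_ws):
    """One mixture-of-experts layer whose expert weights are blended by phase."""
    alpha = softmax(gating_w @ phase_features(phase, amplitude))  # (E,) blend coefficients
    w = np.tensordot(alpha, expert_ws, axes=1)                     # (out, in) blended weights
    return w @ x, alpha


rng = np.random.default_rng(0)
n_channels, n_experts, d_in, d_out = 5, 8, 16, 16  # hypothetical sizes
gating_w = rng.normal(size=(n_experts, 2 * n_channels))
expert_ws = rng.normal(scale=0.1, size=(n_experts, d_out, d_in))

state = rng.normal(size=d_in)          # current pose/state features
phase = rng.uniform(size=n_channels)   # per-channel phase in [0, 1)
amplitude = np.ones(n_channels)
for _ in range(3):                     # autoregressive rollout, one frame per step
    state, alpha = blended_layer(state, phase, amplitude, gating_w, expert_ws)
    phase = (phase + 0.05) % 1.0       # hypothetical: fixed phase advance per frame
```

Blending the weights (rather than the outputs) of the experts gives one specialized network per frame, which is how the phases can cluster movements in space and time.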

Neural Offline Animation Layering

2022

This work brings the idea from the paper "Neural Animation Layering for Synthesizing Martial Arts Movements" into an easy-to-use augmentation tool that enables users without an animation background to quickly generate novel motion combinations.

How does it work?

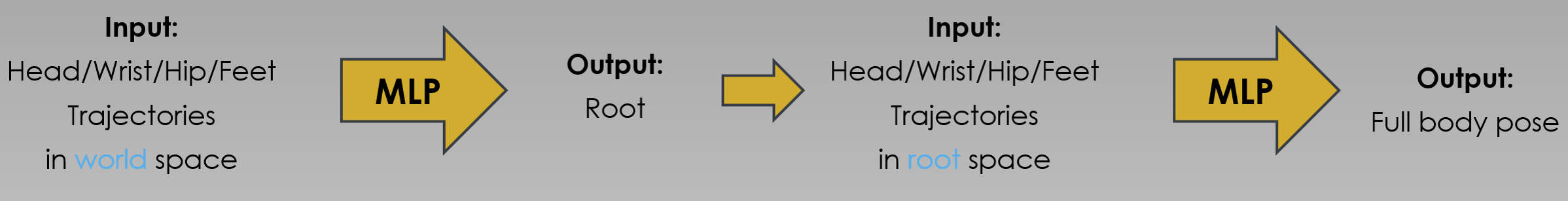

Given a set of body joint trajectories, we can synthesize a very accurate pose with a simple network architecture. To reconstruct the motion from these control signals, we first learn a function that maps the complete past and future trajectory series to the root transformation of the character, and then run a second function that produces the pose.
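The two-stage structure (trajectories to root, then trajectories plus root to pose) can be sketched with linear least-squares regressors standing in for the two networks. The feature sizes and the synthetic, mostly linear data are assumptions made only so the example runs end to end; the actual tool uses neural networks trained on motion capture.

```python
import numpy as np


def fit_linear(inputs: np.ndarray, targets: np.ndarray) -> np.ndarray:
    """Least-squares linear map with bias: targets ~= [inputs, 1] @ w."""
    a = np.hstack([inputs, np.ones((len(inputs), 1))])
    w, *_ = np.linalg.lstsq(a, targets, rcond=None)
    return w


def apply_linear(w: np.ndarray, inputs: np.ndarray) -> np.ndarray:
    return np.hstack([inputs, np.ones((len(inputs), 1))]) @ w


rng = np.random.default_rng(0)
n, d_traj, d_root, d_pose = 2000, 60, 3, 45       # hypothetical feature sizes
traj = rng.normal(size=(n, d_traj))               # past + future joint trajectories per frame
root = traj @ rng.normal(size=(d_traj, d_root))   # synthetic root transform (e.g. x, z, yaw)
root += 0.01 * rng.normal(size=root.shape)        # small noise
pose = np.hstack([traj, root]) @ rng.normal(size=(d_traj + d_root, d_pose))
pose += 0.01 * rng.normal(size=pose.shape)        # small noise

# Stage 1: trajectories -> root transformation
w_root = fit_linear(traj, root)
# Stage 2: trajectories + root -> full pose
w_pose = fit_linear(np.hstack([traj, root]), pose)

# Inference chains the two stages: the predicted root feeds the pose stage
root_pred = apply_linear(w_root, traj)
pose_pred = apply_linear(w_pose, np.hstack([traj, root_pred]))
print(np.abs(pose_pred - pose).mean())
```

Splitting the problem this way lets the second stage condition on an explicit root estimate instead of having to infer global placement and local pose at once.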

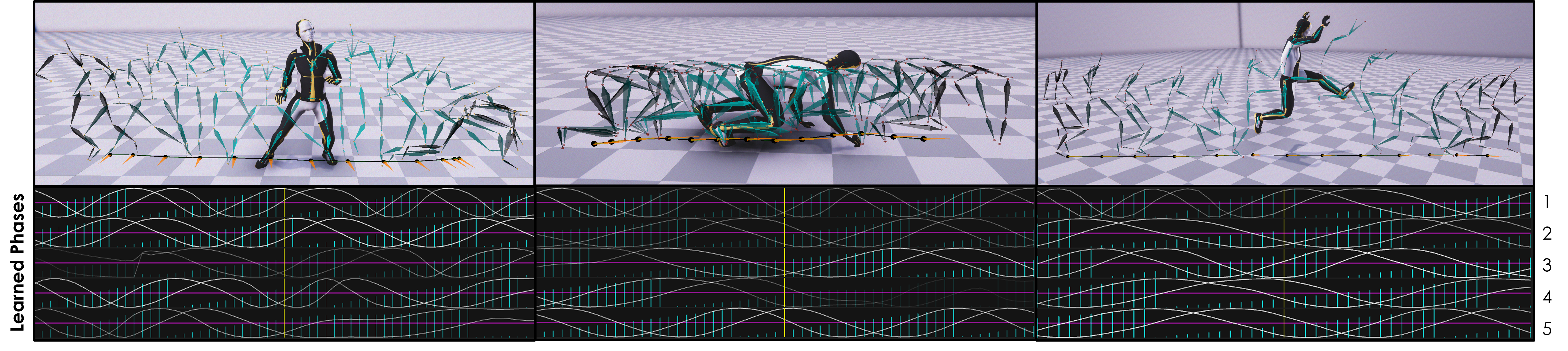

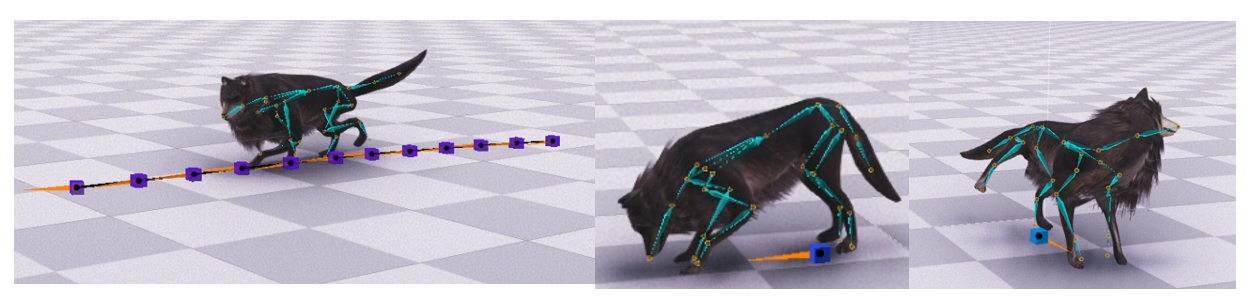

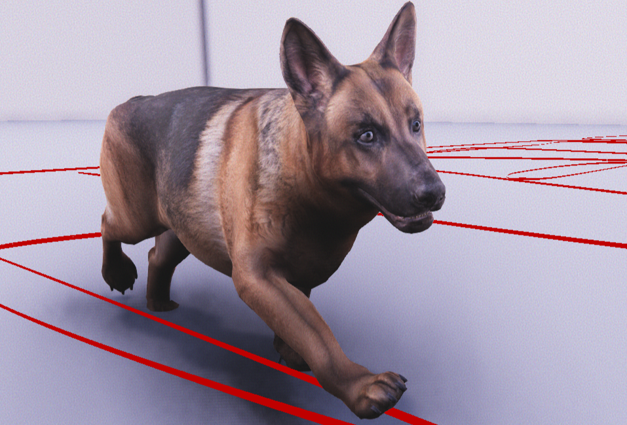

Animation Authoring for Neural Quadruped Controllers

Bachelor Thesis 2020

Creating animation can be a time-consuming, repetitive, and difficult process. Advanced deep learning models for motion synthesis such as the Mode-Adaptive Neural Network (MANN) can generate realistic movements that follow high-level control signals in a plausible manner. In this work, high-quality animation sequences can be generated with just a few clicks using an authoring system that learns from a large set of motion capture data and lets users create such control signals through an intuitive user interface. To do so, the user specifies a path and desired actions at a defined time or position, and rather than playing back existing clips, the system generates realistic movements that follow those control signals in a data-driven manner. Additionally, the dataset is augmented with synthetic motions that are manipulated by inverse kinematics, and these are trained jointly with the motion capture data. Overall, the system provides offline control in Unity for different quadruped character movements, such as locomotion and different stylizations, e.g. jumping, sitting, sneaking, eating, and drinking.

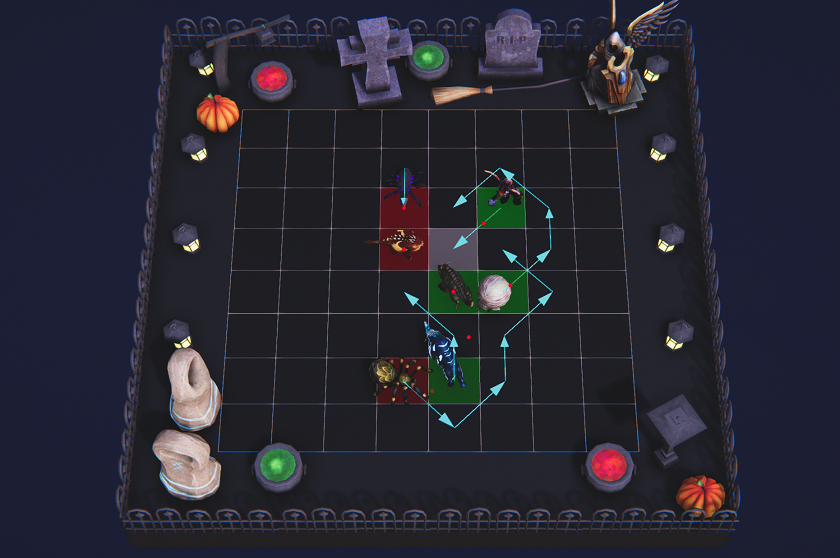

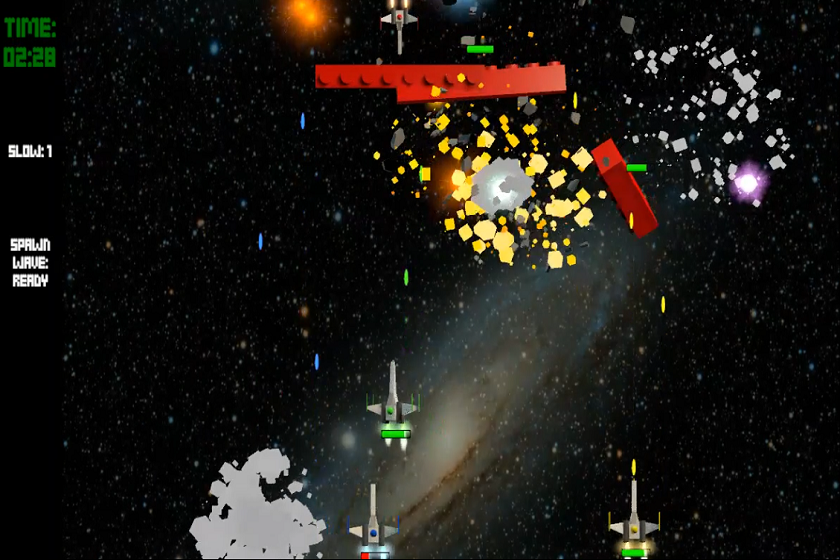

Rapid Fire: 3 vs. 1 Space Shooter Game

University Project 2021

In Rapid Fire, you can shoot your way through space with your friends in an arcade-style, LEGO-themed environment. You can choose to play either alone or in a team of 3 players who aim to survive the attacks of the 4th player for a period of time. In case you are alone in front of the screen right now, don't worry! You can select an AI to control your team's and/or the enemy's spaceships. You will have to dodge obstacles, learn how to use your abilities, and defeat your enemies. Become more powerful by collecting power-ups and try to survive! Enjoy, and remember: you will either win or lose as a team!

The minigame was part of a main game built in the course "Interactive Game Development 2021" at the University of Hamburg and was developed using Unity3D (C#) and Blender over the course of 10 weeks. Here, you can find the trailer and GitHub repository of the main game.

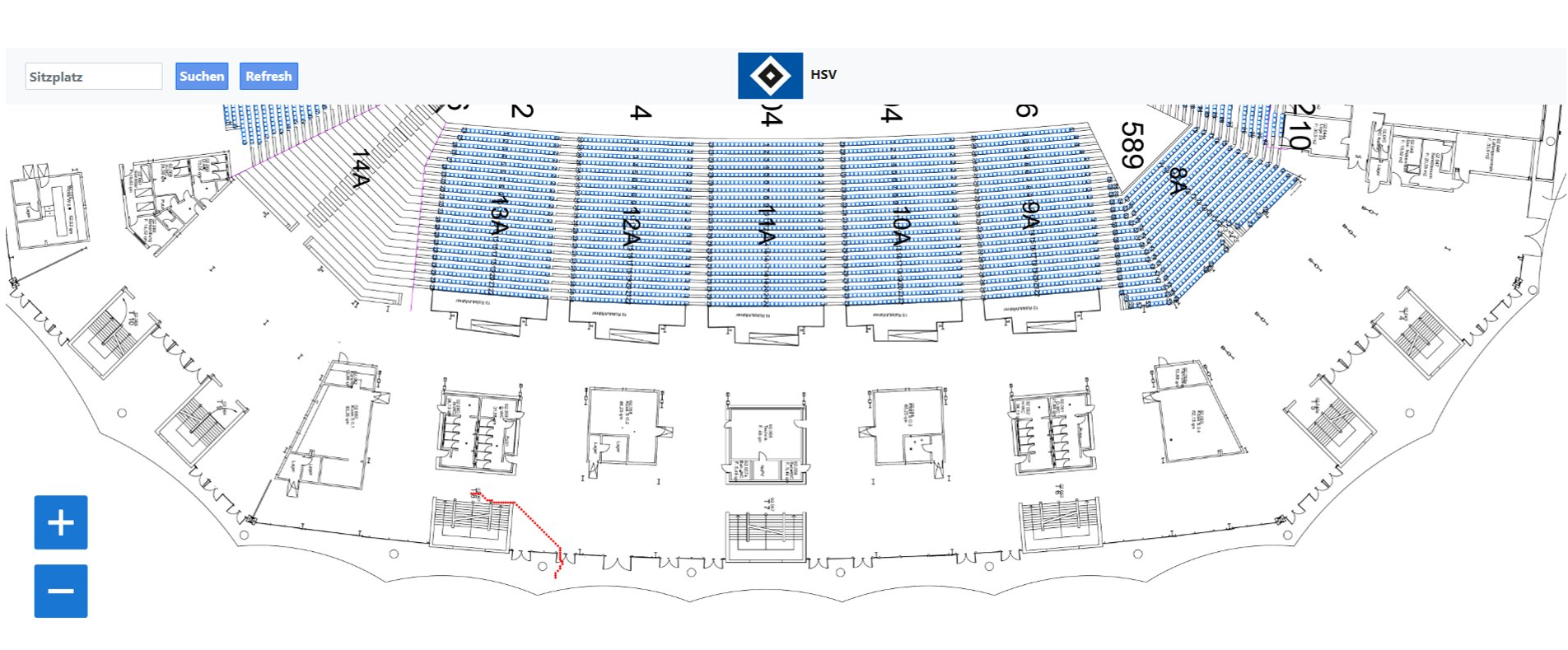

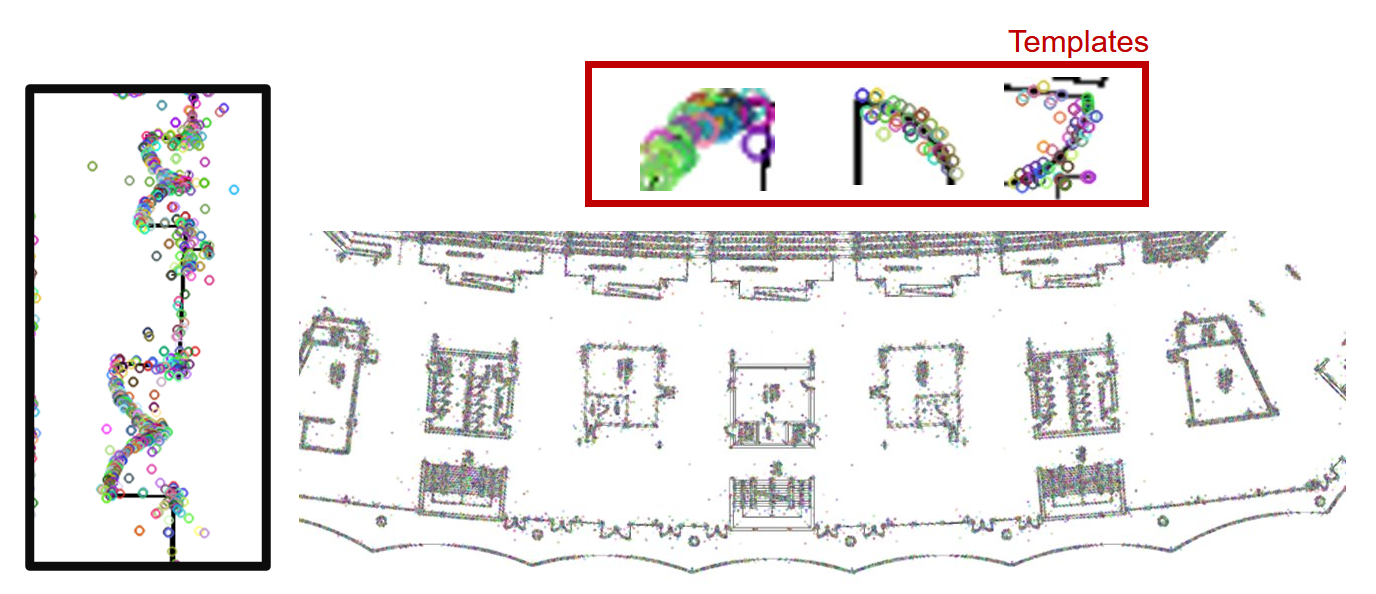

Indoor Stadium Navigation

University Project 2021

In this project, an indoor navigation system was created for the Volksparkstadion of HSV in Hamburg. It was investigated how spectators can be guided through the stadium to their seats along the shortest route. As a basis for this, a 3D model of the stadium was created from its architectural plans using computer vision. For this, the plans of the different levels were transformed into graphs, and their contrast was increased to sharpen the structures and highlight seats, entrances, exits, and stairs. Then, doors and text were detected using machine learning models, and a grid was laid over the walkable area that allows navigation with nodes and edges. The graph is designed to be easily adaptable to different events that require changed navigation, for example concerts, during which parts of the stadium are not accessible. For the application, the 3D model was connected to an interface developed with Python and HTML. To use the navigation system, the user enters a seat location, and Dijkstra's algorithm calculates the shortest path, which is visualized in the interface. The starting point is one of the stadium entrances; in a real-world deployment, it would need to be replaced by the spectator's current location. In the future, the application could be extended to consider stopovers at food or merchandise counters and to distribute visitor flows evenly with the help of mobile phone GPS, reducing waiting times and crowded corridors.
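A minimal sketch of the navigation step: walkable cells of a grid become graph nodes connected to their four neighbors, and Dijkstra's algorithm finds the shortest route from an entrance to a seat. The text-grid layout, the unit edge weights, and the hypothetical `closed` parameter for temporarily blocked areas (as during concerts) are illustrative assumptions, not the project's actual data format.

```python
import heapq
from typing import Dict, List, Tuple

Node = Tuple[int, int]


def grid_graph(layout: List[str]) -> Dict[Node, List[Tuple[Node, float]]]:
    """Build a 4-connected graph over walkable cells ('.') of a text grid."""
    rows, cols = len(layout), len(layout[0])
    graph: Dict[Node, List[Tuple[Node, float]]] = {}
    for r in range(rows):
        for c in range(cols):
            if layout[r][c] != ".":
                continue
            neighbors = []
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < rows and 0 <= cc < cols and layout[rr][cc] == ".":
                    neighbors.append(((rr, cc), 1.0))
            graph[(r, c)] = neighbors
    return graph


def dijkstra(graph, start: Node, goal: Node, closed=frozenset()):
    """Shortest path from start to goal, skipping nodes listed in `closed`."""
    dist = {start: 0.0}
    prev: Dict[Node, Node] = {}
    heap = [(0.0, start)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == goal:
            # Walk the predecessor chain back to the start
            path = [node]
            while node in prev:
                node = prev[node]
                path.append(node)
            return path[::-1]
        if d > dist[node]:
            continue  # stale queue entry
        for nbr, weight in graph[node]:
            if nbr in closed:
                continue
            nd = d + weight
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))
    return None  # goal unreachable


layout = [
    "....#",
    ".##.#",
    ".#...",
    "...#.",
]
graph = grid_graph(layout)
entrance, seat = (0, 0), (3, 4)
route = dijkstra(graph, entrance, seat)
detour = dijkstra(graph, entrance, seat, closed=frozenset({(1, 3)}))
print(len(route) - 1, len(detour) - 1)  # 7 9
```

Keeping closures as a separate parameter, instead of rebuilding the graph, is one simple way to make the same graph reusable across different event configurations.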

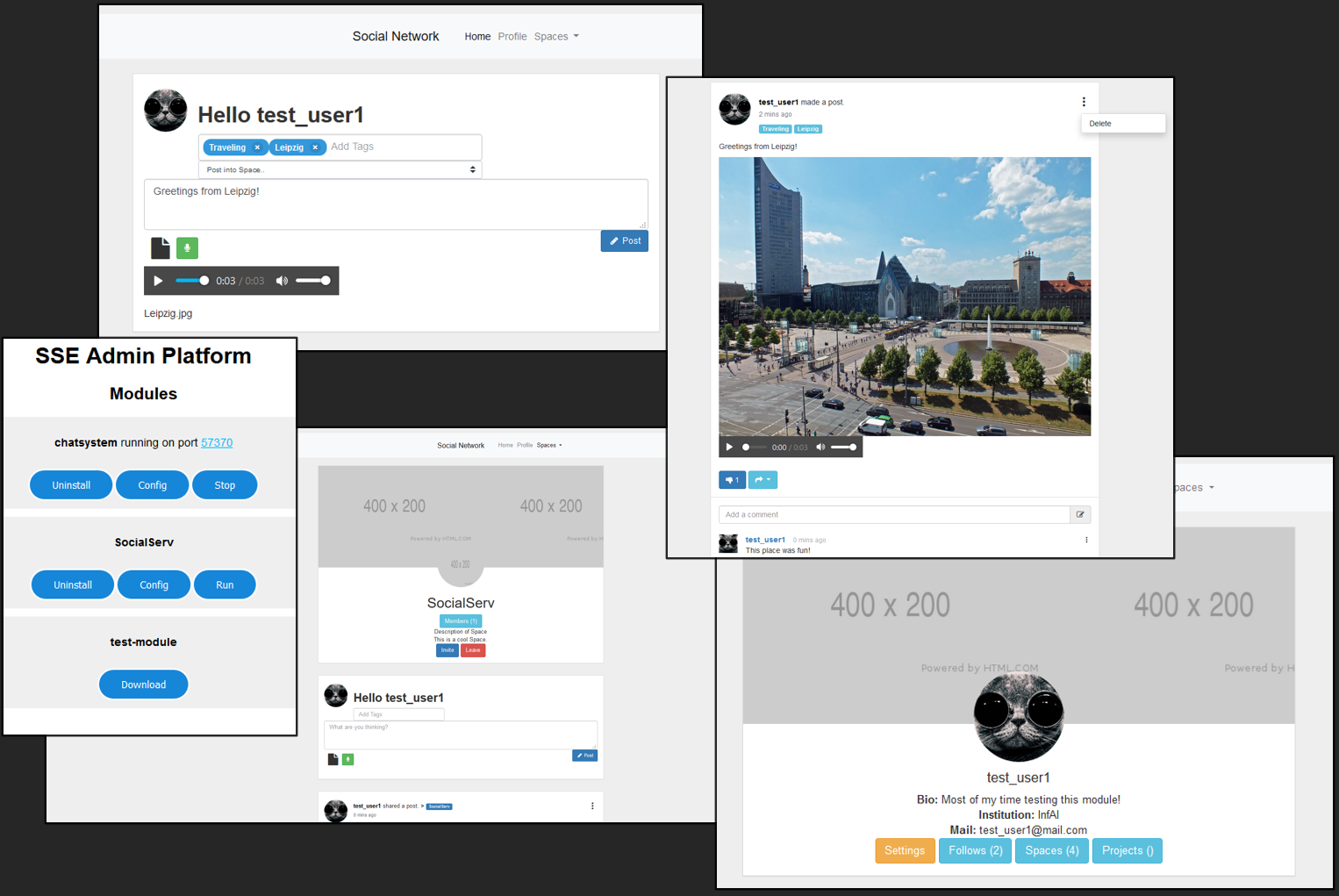

Social Network Platform

Student Project 2020

In this project, a platform for various applications, such as a social network and a messenger, has been deployed. It is still under development but can be used for testing purposes. All data is stored in MongoDB. Python and JavaScript are used to handle all processes. The social platform provides all standard functionality such as posting, liking, following, and sharing.

Game Walkthrough Corpus

University Project 2019

In this project, I worked as a dataset creator for the Game Walkthrough Corpus (GWTC).

The dataset contains 12,295 unique walkthrough documents that cover a total of 6,117 games, which results in two solutions per game on average.

Using Scrapy crawlers, a pipeline was built to collect English- and German-language walkthroughs from multiple platforms.

The text content was extracted, pre-processed, and converted into a generic, uniform, hierarchical TEI/XML markup.

Additionally, the walkthrough documents include various metadata gathered from RAWG and Steam.
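As a rough illustration of the conversion step, the sketch below turns an already-parsed walkthrough (title, source, metadata, and sections of paragraphs) into a hierarchical TEI document with Python's standard `xml.etree.ElementTree`. The element layout is a simplified assumption that uses common TEI element names, not GWTC's actual markup, and the function name, metadata keys, and example values are hypothetical.

```python
import xml.etree.ElementTree as ET

TEI_NS = "http://www.tei-c.org/ns/1.0"
ET.register_namespace("", TEI_NS)  # serialize TEI as the default namespace


def q(tag: str) -> str:
    """Qualify a tag name with the TEI namespace."""
    return f"{{{TEI_NS}}}{tag}"


def walkthrough_to_tei(title, source, metadata, sections) -> ET.Element:
    """Build a simplified TEI tree: header with title/source, body with one div per section."""
    tei = ET.Element(q("TEI"))
    header = ET.SubElement(tei, q("teiHeader"))
    file_desc = ET.SubElement(header, q("fileDesc"))
    title_stmt = ET.SubElement(file_desc, q("titleStmt"))
    ET.SubElement(title_stmt, q("title")).text = title
    pub = ET.SubElement(file_desc, q("publicationStmt"))
    ET.SubElement(pub, q("p")).text = "Walkthrough corpus entry"
    src = ET.SubElement(file_desc, q("sourceDesc"))
    ET.SubElement(src, q("p")).text = source
    # Hypothetical: game metadata (e.g. from RAWG or Steam) stored as typed notes
    for key, value in metadata.items():
        ET.SubElement(src, q("note"), type=key).text = str(value)
    body = ET.SubElement(ET.SubElement(tei, q("text")), q("body"))
    for heading, paragraphs in sections:
        div = ET.SubElement(body, q("div"))
        ET.SubElement(div, q("head")).text = heading
        for para in paragraphs:
            ET.SubElement(div, q("p")).text = para
    return tei


doc = walkthrough_to_tei(
    "Example Game Walkthrough",
    "https://example.com/walkthrough",  # hypothetical source URL
    {"genre": "Adventure", "release": 2010},
    [("Chapter 1", ["Go north.", "Take the key."]), ("Chapter 2", ["Open the door."])],
)
xml_text = ET.tostring(doc, encoding="unicode")
print(xml_text[:60])
```

Because every walkthrough ends up with the same nested `div`/`head`/`p` structure regardless of its source platform, downstream tools can query sections uniformly.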

Please refer to the development page and the published paper for more detailed project information, the dataset description, observations, and how this corpus could be used in your own research projects.